Java Standard Edition (JSE) 9 (‘Java 9’) was finally completed and publicly released at the end of Sept 2017. This post contains a brief overview of what’s new in Java 9, and outlines the main reasons why you or your business might consider upgrading, if at all. It also provides developers with a link to a set of code examples that I’ve produced, which showcase the major new language features in Java 9 and explains each of them in more detail.

Author Archives: neiljbrown

Evaluating message brokers – Amazon SQS

Message brokers are specialised pieces of enterprise middleware designed to support integrating applications in a decoupled (in both time and location) manner, using messaging channels, implemented as either queues (for single consumer) or topics (for multiple consumers). Whilst deploying and operating a broker has an additional, ongoing cost for a business, if the scale of integration in your system and the non-functional requirements warrant it, they can can provide a flexible, better performing and more scalable solution than the alternative of implementing message queues in your database, especially, as is often the case, the latter is already overloaded. There are a considerable number of proven message brokers available today from a variety of vendors. Before committing to building your integrations on a particular broker, you should give careful consideration to how well it satisfies your requirements. I recently went through such an evaluation exercise, and ended-up choosing Amazon Simple Queue Service (SQS). While we haven’t regretted this decision, I did learn a few things along the way. In this post I’ll share a list of the functional and non-functional (technical) requirements that you should consider as part of evaluating a message broker, and also my opinion on how SQS measures up to other brokers in each case, and the trade-offs.

There are a considerable number of proven message brokers available today from a variety of vendors. Before committing to building your integrations on a particular broker, you should give careful consideration to how well it satisfies your requirements. I recently went through such an evaluation exercise, and ended-up choosing Amazon Simple Queue Service (SQS). While we haven’t regretted this decision, I did learn a few things along the way. In this post I’ll share a list of the functional and non-functional (technical) requirements that you should consider as part of evaluating a message broker, and also my opinion on how SQS measures up to other brokers in each case, and the trade-offs.

Message Broker or Bus – what’s the difference?

In the process of evaluating and trialling the introduction of messaging to integrate distributed back-end services, and other apps, in our system, the question of whether we were aiming to design a Message Broker or a Message Bus arose. This led to me doing some research to verify and improve my understanding of the difference. This post is a summary of my findings.

Improving Log Aggregation & Search for Application Services

When my team and I first took on responsibility for modernising our business’ backend application services, we found it was hard to obtain a full picture of the events that were being logged by services, in a timely manner. Logging of events are an essential source of data for monitoring how a service is operating, and also to support troubleshooting of problems e.g. the no. and rate of failed API requests, and supporting failure diagnostics. Therefore, one of the first tasks we prioritised as a (devops) team was to improve this situation by provisioning a modern log aggregation and search solution. This post outlines the problems we addressed, the architectural pattern we used as the basis of the solution, the tools we used to implement the pattern, and the resulting benefits.

Designing message consumer error handler for Amazon SQS

When building a message consumer you need to handle the various errors that will inevitably occur during message processing. If you’re using Amazon SQS as your message broker it provides some built-in support for error handling that you can utilise. But, if you want to handle all types of errors efficiently you should also add your own custom error handler. This post explains how you can do both these things, including how to classify message processing errors.

JSON Schema Part 2 – Automating JSON validation tests

In my first post on the topic of JSON Schema I outlined the limitations of specifying data-formats by example, described how schema languages help address this, and provided an introduction to the not so well known schema language for JSON – JSON Schema.

In that first article I stated my intention to document non-trivial JSON data-format I specified in the future using JSON Schema in addition to using specification-by-example, whether it be for an API resource or a message payload. When you adhere to this you quickly realise that while producing a JSON Schema is valuable, to increase the value you’ll additionally want to extend the tests for your JSON producing components to automate the following:

- Test the schema remains valid – i.e. it’s well-formed and conforms to the JSON Schema spec.- in order to avoid publishing a broken or invalid schema.

- Test your app produces JSON that conforms to the published schema. This goes beyond just asserting that the component produces an expected lump of JSON, to additionally check that the generated JSON conforms to any validation constraints in the schema.

This follow-up, second post on JSON Schema, provides example code for implementing the above such tests using Java, JUnit and an open-source library for JSON Schema Validation.

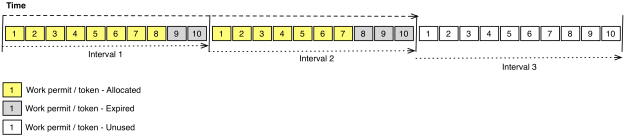

Managing the Rate of Message Consumption

This is a short post on a couple of approaches to managing the rate of message consumption, their differences and how to choose between them.

Introducing JSON Schema

XML has a supporting schema-language in the form of the XML Schema. What you may be unaware of is that JSON has one too.

Read-on to find out more and why you might want to use it if you’re not already.

This is the first of two articles on the topic of JSON Schema. The second article (link at the end of this one) covers the topic of automating testing of JSON Schema form a couple of angles.

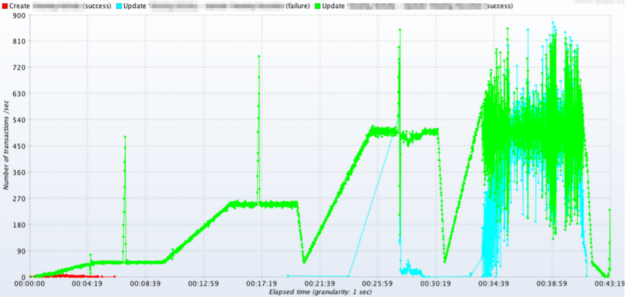

Measuring API Throughput using JMeter

As an online business grows in popularity it can highlight scalability problems in your architecture. Certain hardware and/or software components can struggle to keep up with increased demand and it may not be easy or cost-effective to address this by extending them in their present form, especially when the demand is spiky in nature. My team was recently faced with such an issue. We decided to engineer our way out of the problem by designing a more scalable replacement solution. Key technical requirements for the new solution included the throughput and latency of a public web API. In this post I share details of how you can measure these performance characteristics of an API using Apache JMeter, and in the process I identify a very useful supporting JMeter plugin.

JMeter – Throughput per second graph

Amazon API Gateway – What’s it all about?

My team and I design and build a lot of REST APIs during the course of our work, to expose functionality and data to client systems, and to integrate our back-end services with one another. I was therefore interested in learning more about Amazon’s recently launched API Gateway service. This is a short post summarising the role and major features of Amazon API Gateway based on my own preliminary research and trialling. I also briefly cover what the service is not designed to support, and consider possible future use-cases.